From Modern to Quantum Cryptography in 30 Minutes

BLOG: Heidelberg Laureate Forum

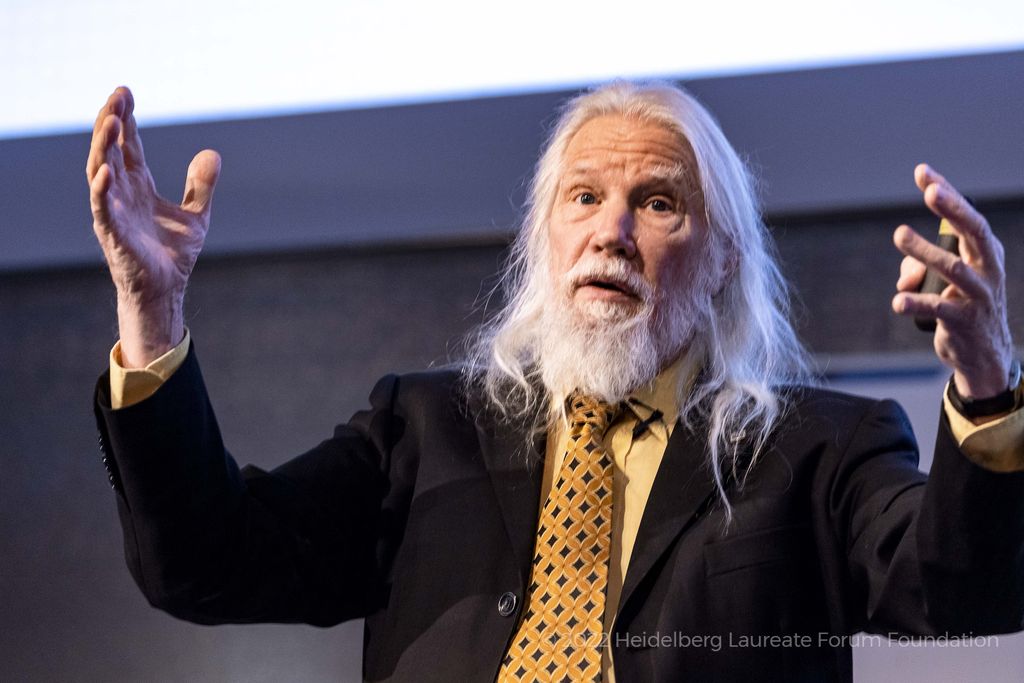

Whitfield Diffie. © Heidelberg Laureate Forum Foundation / Flemming

On the final day of Heidelberg Laureate Forum 2022, we were treated to a panel discussion on the importance of post-quantum cryptography. The panel included some of the leading names in cryptography and computer science: Vinton Cerf, Whitfield Diffie and Adi Shamir.

Quantum cryptography, however, can be a heavy topic to drive straight into. Before the panel commenced, Whitfield Diffie gave an introduction to the subject, bringing the audience up to speed on some of the key milestones in the history of cryptography.

The very beginning

Whitfield Diffie began his exposition by guiding us through the initial developments of modern cryptography. Cryptography has many advantages, for example it can ensure that messages can be sent to friends with the security that even if enemies do intercept the messages, they can’t read them. In some cases, it’s also possible to encrypt things in such a way that it is possible to detect if a message has been tampered with.

In Diffie’s view, modern cryptography has two main origins: Al-Kindi in Baghdad about 1200 years ago, and Leon Batista Alberti in Italy around 500 years ago. Both of these scientists realised that in order to improve the cryptographic methods of the time, they needed to use something called a polyalphabetic cipher.

Polyalphabetic ciphers use substitution, much like the famous Caesar shift cipher, where each letter is replaced by one a fixed number of steps along in the alphabet. However, what sets polyalphabetic ciphers apart from others is that the substitution they use changes with each character. An example of this is the enigma machine, one of the most well-known instances of cryptographic technology.

Diffie gave an overview of how encryption systems have changed over time: Huge leaps in technology were made in WWI due to the development of radios, and in WWII due to steps forward in electronics. We also saw surges in computing technology in the 60s as well as software engineering in the 80s. Cryptography in the later half of the 20th century was characterised by the move from stream ciphers – encrypting one character at a time – to block ciphers, which can parallelise to encrypt 64 or even 128 characters at once.

Modern Cryptography

This brings us to cryptography as we know it today. One of the big challenges of cryptography is key management. Keys are information that can be used to encode and decode messages. There are many challenges when working with keys – including storage and distribution – and these challenges are increased by orders of magnitude if the keys must be kept secret.

The solution to this is public-key cryptography, where the keys are made publicly available. This allows parties to openly negotiate keys, and for parties to “digitally sign” messages, so their authenticity can be proven.

I want to demonstrate how two of the main public-key cryptosystems work, but first we are going to have to do a bit of learning.

Modular Arithmetic

Modular arithmetic is a powerful tool in number theory, and is something you will probably have been doing for a large portion of your life, without even realising it! Suppose it’s \(7\)am, and I ask you what the time is in \(8\) hours time. You might say \(1500\) hours, but what you’ll probably say is \(3\)pm. What you would have done, without even realising it, is given me the remainder when you divide the time by \(12\).

Modular arithmetic looks at the remainders when we divide by a given number \(n\), which we call the modulus. \(a\) and \(b\) are equivalent modulo \(n\) if they give the same remainder when divided by \(n\). We write this as \(a \equiv b \) (mod \(n\)).

The reason this is so powerful is that addition, subtraction, multiplication, and exponentiation all work in much the same way. The only difference is that the numbers loop round, just as they do with the clock. For example, \( 7 \times 3 = 21 \) but if we work modulo \( 8\), then we see that \( 7 \times 3 \equiv 5 \) (mod \(n\)).

This looping property is useful in cryptography as it means we can, in some situations, start with a message and my repeated exponentiation end up with the same message. An example of this in use is in the RSA algorithm below. It’s also useful as it means that we can carry out several mathematical operations that in normal circumstances would result in a number which is very large to store and send. Modular arithmetic gives us an upper bound for how large numbers can get.

Diffie-Hellman Key Negotiation

One method that can be employed to publicly but securely exchange cryptographic keys is the Diffie-Hellman protocol, named after Whitfield Diffie and Martin Hellman (yes, the same Whitfield Diffie who gave the talk). This was the work that won Diffie his 2015 ACM A.M. Turing Award.

Let’s say Alice and Bob want to be able to communicate (Alice and Bob being the famous, often-used example names in cryptography). They publicly agree on a prime number \(p\), and an integer \(g\) which is coprime to \(p\).

Alice then chooses a secret integer \(a\) and sends Bob \(A = g^a\) (mod \(p\)). Bob uses this to compute \(s = A^b\) (mod \(p\)).

Meanwhile, Bob chooses a secret integer \(b\) and sends Alice \(B = g^b\) (mod \(p\)). Alice uses this to compute \(s = B^a\) (mod \(p\)).

Alice and Bob exchange information

It’s important to note here that these are the same \(s\): \(A^b \equiv {(g^a)^{b}} \equiv g^ab \equiv {(g^b)^{a}} \equiv B^a\) (mod \(p\)).

Now Alice and Bob have a shared key that nobody else is able to easily calculate. They can use this key to encrypt and decrypt messages however they like, without having any clue what secret integer each other chose.

The important thing here is that in modular arithmetic (and also in general) exponentiation is relatively easy to do, but it’s inverse (finding logarithms) is hard.

The RSA Algorithm

One of the oldest public-key cryptosystems is RSA, named after Ron Rivest, Adi Shamir, and Leonard Adleman (yes, the same Adi Shamir who was on the panel). This was the work that won Shamir his 2002 ACM A.M. Turing Award.

Suppose Alice wants to receive a message from Bob. First, Alice randomly chooses two large prime numbers \(p\) and \(q\). She then computes \(n = pq\).

At this point, Alice does a bit of number theory. It gets a bit fiddly so I’ll leave interested readers to do their own research. The outcome of this is that Alice uses \(n\) to find two numbers, \(d\) and \(e\), with the property that, for any integer \(x\), \( (x^e)^d \equiv x (\text{ mod } n)\).

Alice then publishes \(n\) and \(e\), but keeps \(d\) a secret. \((n,e)\) is known as her public key, and \((n, d)\) is her private key.

Alice shares her key

Now it’s Bob’s turn to send his message. Let’s say Bob’s message is some \(m\) with \(0 \leq m < n\). Using Alice’s public key, Bob calculates \(c \equiv m^e\) (mod \(n)\) and broadcasts the ciphertext \(c\).

Now Alice can take Bob’s message and decrypt it by calculating \(c^d \equiv (m^e)^d \equiv m\) (mod \(n\)). Due to the careful choosing of \(d\) and \(e\), this calculation returns the original message \(n\)!

The crucial thing to notice here, is that the whole algorithm relies on the fact that multiplication is much easier than factorisation. \(d\) cannot be obtained without factorising \(n\). Bob can use \(n\) and \(e\) to encrypt his message, but without \(d\), he has no way of being able to decrypt any message. Hence Alice can be safe in the knowledge that nobody else can read the message.

This is the cryptographic equivalent of sending somebody else your padlock to put on a case so that they can return it using a public postal service, confident that only you will be able to open the case.

Quantum Cryptography

Now that we know the algorithms used in modern encryption, we can understand a bit about how quantum computers make them significantly less secure.

To describe how quantum computing breaks public keys, Whitfield Diffie used the analogy of a race track where it’s easy to go forward, but very difficult to go backward. This race track could be thought of as analogous to the exponentiation modulo \(n\), for example.

However, if you know the length of the track, instead of going backwards you can loop round the correct distance and end up where you need to be. But this relies on knowing exactly how far forward you need to go. The length of the track in this analogy is equivalent to knowing someone’s private keys.

Quantum computing is incredibly parallelisable. Each qubit (quantum bit) is in a superposition of states. While classical bits can be either \(0\) or \(1\), qubits are a superposition of the two. When qubits are combined, the number of states grows exponentially and allows many calculations to be run simultaneously. As a result, processes that would typically take so long as to be deemed impossible in practise (even if not theoretically impossible), are now very much possible.

What this means is that quantum computing can perform calculations much more quickly than traditional computers. Modern cryptographic algorithms rely on the fact that it’s not impossible to break the encryption, but would take more computer time than the age of the universe. But quantum computers are so fast that this becomes meaningless – quantum computing can decrypt these messages.

Whitfield Diffie. © Heidelberg Laureate Forum Foundation / Flemming

What happens next?

Currently, quantum computers capable of anything like the calculations needed for decryption are a thing of the future. Our messages are safe for now. However, should they become a reality, it would be possible to factorise prime numbers incredibly quickly and foundations on which modern cryptography is built would crumble.

Mathematicians and computer scientists are working to find quantum-resistant methods of encryption. We’ve just got to hope they do so before it’s too late.

The full panel discussion, along with the entire scientific program of the 9th HLF, can be viewed on the HLFF YouTube channel.

Sophie Maclean wrote (28. Oct 2022):

> […] \(a\) and \(b\) are equivalent modulo \(n\) if they give the same remainder when divided by \(n\).

This equivalence (joint membership of \(a\) and \(b\) in one particular “arithmetic” equivalence class) is more specifically a.k.a. “\(a\) and \(b\) being congruent modulo \(n\)”; cmp. https://en.wikipedia.org/wiki/Congruence_relation#Basic_example

Note that if \(a\) and \(b\) leave the same remainder when divided by \(n\), neither \(a\) nor \(b\) themselves are necessarily equal to that same, common remainder.

> We write this as \(a \equiv b \text{ (mod } n\text{)} \).

That’s indeed a very common notation of the congruence relation; though certain notation variants may be found more advantageous cmp. https://math.stackexchange.com/a/1559911

> […] the Diffie-Hellman protocol […] Alice and Bob […] publicly agree on a prime number \(p\), and an integer \(g\) which is coprime to \(p\).

> Alice then chooses a secret integer \(a\)

Right.

> and sends Bob \( A = g^a \, \text{ (mod } p\text{)} \).

Here the expression \(g^a \, \text{ (mod } p\text{)} \) itself is (surely) supposed to stand for the one/unique remainder value which remains when dividing \(g^a\) by \(p\), a.k.a. carrying out “the modulo operation”; where

\( 0 \le g^a \, \text{ (mod } p\text{)} \lt p \) and

\(\exists \, k \in \mathbb Z \, | \, g^a = (k \, p) + \left( g^a \, \text{ (mod } p\text{)} \right) \).

Also, there are several other notations of the modulo operation available, some of which might be of more readily distinguishable appearance from the common notation of the congruence relation used above.

> Bob [… having chosen] a secret integer \(b\) […] uses this to compute \( s = A^b \, \text{ (mod } p\text{)} \).

Again, importantly, and as above, the expression \(A^b \, \text{ (mod } p\text{)} \) denotes the one/unique remainder value which remains when dividing \(A^b\) by \(p\).

> Meanwhile, Bob […] sends Alice \( B = g^b \, \text{ (mod } p\text{)} \) [and] Alice uses this to compute \( s = B^a \, \text{ (mod } p\text{)} \).

> It’s important to note here that these are the same \(s\):

This strikes my as a bit of an awkward formulation …

Sure, notably, there are two (separate, distinct) instances of \(s\) in the two (separate, distinct) corresponding equations above.

And thus arise some (important) questions:

“(Why) Is it justified to use the exact same symbol \(s\) in these two instances (outright)?”

(Some might have circumvented such a question being raised, by outright introducing distinct symbols \(s_A\) and \(s_B\) instead, for example …);

and:

“Are the two values which the variable \(s\) takes in these two instances, respectively, equal to each other?”

(An equivalent question could be asked about the value taken by the variable symbolized as \(s_A\), and the value taken by the variable symbolized as \(s_B\), if applicable …)

Either way — clearly:

\(\left(g^a\right)^b = g^{(a \, b)} = g^{(b \, a)} = \left(g^b\right)^a\),

and (a little more subtile), as a special case of the “Congruence Power Rule”:

\( \left(g^a \, \text{ (mod } p\text{)}\right)^b \equiv \left(g^a\right)^b \text{ (mod } p\text{)} \)

as well as

\( \left(g^b \, \text{ (mod } p\text{)}\right)^a \equiv \left(g^b\right)^a \text{ (mod } p\text{)} \).

Then together (pretty much as shown already in the above SciLogs article):

\(A^b \equiv \left(g^a\right)^b \equiv \left(g^b\right)^a \equiv B^a \text{ (mod } p\text{)} \).

Therefore also, finally:

\(A^b \, \text{ (mod } p\text{)} = s = B^a \text{ (mod } p\text{)}\).

p.s. — another less subtile issue:

> […] The RSA Algorithm […] Suppose Alice wants to receive a message from Bob. […]

> Let’s say Bob’s message is some \(m\) […]

> Now Alice can [… recover] the original message \(n\)! […]