Writing for mathematical clarity

BLOG: Heidelberg Laureate Forum

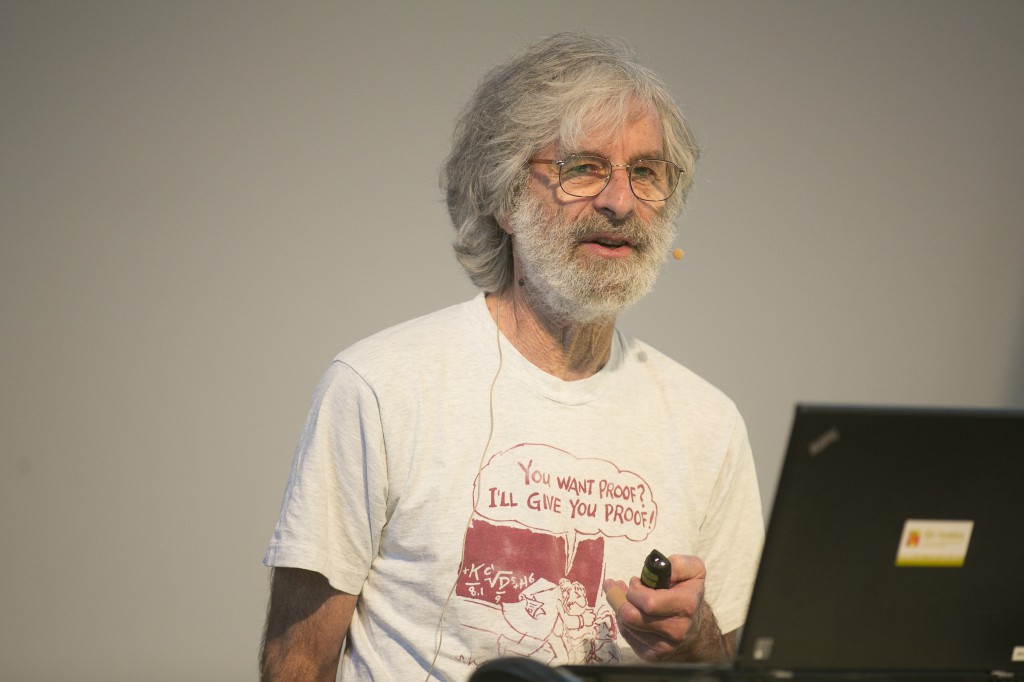

Yes, he does get that a lot. And translating freely from international body language, Leslie Lamport could well do without another unoriginal “Thank you” from a grateful LaTeX user. Comparatively few people, it seems, ask him about his real work.

Asking Leslie Lamport about LaTeX is akin to asking Peter Ustinov about playing Nero in “Quo Vadis”, or asking Alec Guinness about “Star Wars”. But fact is that LaTeX is what Lamport, and in particular his name’s first syllable, is widest known for.

When Donald Knuth (whom we’re unlikely to see at HLF) created the typesetting system TeX, part of what made the system powerful and elegant also made it somewhat difficult to use. LaTeX started out as a set of macros for TeX, created by Lamport in the 1980s for a book he was writing. Nowadays, LaTeX in its various incarnations are the de facto standard for document preparation in wide areas of science. Go on the arXiv – the largest server for electronic preprints in physics and astronomy, among other things – and you will find that LaTeX-written articles dominate; most physics and astronomy journals will ask potential authors to submit manuscripts already typeset in the journal’s specific LaTeX style, and most publishers will ask the same for text book manuscripts. This is why LaTeX is a household name among physics (etc.) students, postdocs and more senior researchers alike.

But LaTeX development has long been taken over by others, and in computer science, Lamport’s real work (which won him the 2013 Turing Award – hence his presence at HLF) is LaTeX-independent. It concerns distributed systems – think separate modules sending messages back and forth across a network, which can execute certain calculations independently from each other, in particular: concurrently. Some of Lamport’s work sits right on the boundary between the HLF’s two main subject areas: mathematics and computer science.

Take TLA, short for “Temporal Logic of Actions” – a formal language for specifying systems and the way they can change (e.g. step by step) over time (more info on Lamport’s TLA Homepage). His language TLA+ allows users to specify systems by defining their possible states and the way these states can change, using basic mathematics (in particular, boolean logic), and as little more than that as possible.

The use of this kind of formalization is summed up nicely in the introductory pages of Lamport’s book Specifying Systems, where one section is headed by a quote from the cartoonist Richard Guindon: “Writing is nature’s way of letting you know how sloppy your thinking is.” (I love this quote!) In the same vein, Lamport then continues: “Mathematics is nature’s way of letting you know how sloppy your writing is”, you can find some paragraphs later, and a bit later still, “Formal mathematics is nature’s way of letting you know how sloppy your mathematics is.”

You have to be neither Sapir nor Whorf to see where this is heading: A language that has clearly defined rules will cut down the number of sloppy mistakes than a language that allows for more imprecision. Back in the days, I’m told, the use of latin as the main language of science was sometimes justified in just that way.

This (like other applications of predicate logic) might seem like an overly formal way of dealing with real world systems. On the other hand, once a system becomes suitably complex and all our human short-cuts have become woefully insufficient, a formal description of the system, allowing for systematic (so rigidly simple, a computer can do it!) exploration of system behavior, might turn out to be the only way of making sure you are not overlooking anything important. It’s no accident that Amazon is using TLA+ to verify key properties of their core services – to make sure that the complex interaction of the various algorithms employed does not create nasty, and potentially costly, surprises.

While this comes under the heading of basic mathematics coming to the aid of computer science, Tuesday’s talk went in the opposite direction: It was all about how to write proofs in simple, structured ways, which make it more obvious when something has gone wrong. The proof structure proposed by Lamport is not difficult, and its elements – such as clearly separating the steps, listing the assumptions and previous steps that were used explicitly for each step – can successfully be implemented on their own.

I found Lamport’s examples and arguments quite convincing (and kept wondering how much of what he said would carry over to writing about physics and other sciences), and the feeling seemed to be quite wide-spread in the HLF audience.

Most of those present at today’s lecture are likely to think about Lamport’s way of writing proofs the next time they are faced with the task of writing about (or teaching) mathematics. But even so, it’s probably true that Lamport’s ideas about writing mathematical proofs are nature’s way of telling him how difficult it is to make mathematicians change their deeply ingrained habits.

Video of Lamport’s complete lecture – including slides

Markus Pössel schrieb (24 September 2014):

> […] separate modules […] which can execute certain calculations independently from each

other […] sending messages back and forth

> TLA, short for “Temporal Logic of Actions” – a formal language for specifying systems and

the way they can change […]

> His [Leslie Lamport’s] language TLA+ [ http://research.microsoft.com/en-us/um/people/lamport/tla/book.html ] allows users to specify systems by defining their possible states and the way these states can change

A language to describe

– the “sending of messages back and forth“; and

– applicable “certain independent calculations“, such as (foremost) the independent

determinations which messages had been received by whom in coincidence, and which not

?

And, based on that: a way to facilitate automated reasoning about relations (temporal, or otherwise) between the corresponding distinguishable “modules” (who exchanged messages between each other and who each carried out their individual calculations or judgements)

?

That would surely be nice; as anyone will attest who has struggled with the complexities of Robb’s “Geometry of Time and Space”, or with the coordinate-infestation in all-too-typical other treatments of the theory of relativity.

But it seems very doubtful that Lamport’s particular effort is up to this task.

Even his notion of “module” (cmp. chap. 17, “The Meaning of a Module”) does not seem

recognizable in the above sense;

and related salient notions(“event”, “participant”, “indication”, “coincidence”, “simultaneity”, … “duration”, …) do not even appear in the book’s Index.

Thoughtful commentary . Speaking of which , if people requires to merge PDF or PNG files , my boss encountered a tool here altomerge.com.