Artificial Intelligence and the Challenge of Modeling the Brain’s Behavior

Yesterday morning at the Heidelberg Laureate Forum (HLF), laureates Yoshua Bengio (2018 Turing Award), Edvard Moser (2014 Nobel Prize in Physiology or Medicine), and Leslie G. Valiant (1986 Nevanlinna Prize and 2010 Turing Award) each presented a lecture related to artificial intelligence or the modeling of the brain.

Yoshua Bengio’s lecture on “Deep Learning for AI” provided a retrospective on some of the key principles behind the recent successes of deep learning. Dr. Bengio’s work has mostly been in neural networks, which are inspired by the computation found in the human brain. One of the key insights in the field was to represent words as vectors of numbers. This allowed relationships between words to be learned (e.g., that cats and dogs are both household pets) without the vectors having to be handcrafted.
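To make the idea of word vectors concrete, here is a minimal Python sketch of how relatedness between words can be measured once each word is represented as a vector. The three-dimensional vectors in `EMBEDDINGS` are hypothetical values invented for illustration; real systems learn vectors with many more dimensions from large text corpora instead of having them written by hand. Cosine similarity is one common way to compare such vectors and is assumed here, not taken from Bengio's lecture.

```python
import math

# Hypothetical 3-dimensional "embeddings", invented for illustration only.
# Real systems learn much larger vectors from data rather than handcrafting them.
EMBEDDINGS = {
    "cat": [0.9, 0.8, 0.1],
    "dog": [0.8, 0.9, 0.2],
    "car": [0.1, 0.2, 0.9],
}


def cosine_similarity(u, v):
    """Cosine of the angle between vectors u and v (1.0 means same direction)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


if __name__ == "__main__":
    cat, dog, car = EMBEDDINGS["cat"], EMBEDDINGS["dog"], EMBEDDINGS["car"]
    print(f"cat vs dog: {cosine_similarity(cat, dog):.3f}")
    print(f"cat vs car: {cosine_similarity(cat, car):.3f}")
```

With these toy values, "cat" and "dog" point in nearly the same direction and score much higher than "cat" and "car", which is the kind of learned relationship the paragraph describes.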

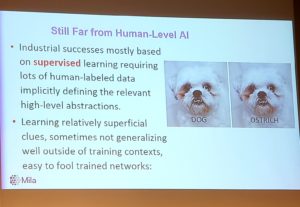

Unfortunately, we are still far from human-level AI. Many recent industrial successes have been based on supervised learning, which requires lots of human-labeled data that implicitly defines the relevant high-level abstractions. Yet it is still easy to fool many of these trained systems with changes that would obviously not trick a real human being (see the slide). Bengio suggested that progress could come from wider use of attention mechanisms. A standard neural network processes all of its input in the same way, but we know that our brains focus computation in specific ways based on the previous context (i.e., they pay attention to what is important). Applying attention mechanisms allows for more responsive and dynamic computation and might enable machines to do things that look more like reasoning.
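As a rough illustration of what "focusing computation" can mean, the sketch below implements scaled dot-product attention for a single query, the variant popularized by the Transformer architecture. The lecture summary does not say which attention mechanism Bengio had in mind, so treat this as one common example rather than his proposal; the function names `softmax` and `attend` and the toy inputs are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax: turns raw scores into weights that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attend(query, keys, values):
    """Scaled dot-product attention for one query vector.

    Each key is scored by its dot product with the query, divided by
    sqrt(d); the softmax of the scores decides how much of each value
    vector flows into the output.
    """
    d = len(query)
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)
    output = [
        sum(w * value[j] for w, value in zip(weights, values))
        for j in range(len(values[0]))
    ]
    return output, weights


if __name__ == "__main__":
    # Hypothetical toy inputs: the query is most similar to the second key.
    query = [0.0, 1.0]
    keys = [[1.0, 0.0], [0.0, 1.0], [0.5, 0.5]]
    values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
    output, weights = attend(query, keys, values)
    print("weights:", [round(w, 3) for w in weights])
    print("output:", [round(x, 3) for x in output])
```

The softmax weights are the "attention": inputs whose keys match the query more closely contribute more to the output, and the weights shift whenever the query (the context) changes.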

Bengio also stressed the importance of directing AI toward social good. While AI can of course be beneficial or benign, it can also be abused and used to disenfranchise vulnerable groups. In an example given during his later press conference, he warned that deep learning for facial recognition could be used in weapons that kill specific people or populations without a human in the loop. Bengio has been part of efforts to persuade governments and the UN to support treaties preventing the use of such systems, but so far these efforts have gained little traction.

When asked during the press conference about the risk of AI displacing workers, Bengio said he believes the risk is real: the job market will likely change more rapidly than it has in the past, and even if new jobs are created, old jobs may be destroyed too quickly for people, especially older people, to retrain in time. He argued that a universal basic income (UBI) could be one way to soften these impacts. However, even if UBI could solve these economic problems, the wealth generated by high tech risks being concentrated in a few wealthy countries. This could be dangerous because the costs of these technologies (in terms of job losses) could still fall on countries that cannot afford to bear them.

Still, Bengio stressed that computer scientists should “favor ML applications which help the poorest countries, may help with fighting climate change, and improve healthcare and education.” He also highlighted the work of AI Commons, a non-profit he co-founded that seeks to extend the benefits of AI to everyone around the world.

Edvard Moser’s lecture, “Space and time: Internal dynamics of the brain’s entorhinal cortex,” focused on a study of mice that sought to understand how mammals process spatial information in the entorhinal cortex and hippocampus. In mammals, place cells (in the hippocampus) and grid cells (in the entorhinal cortex) fire when the animal is in particular locations. This firing enables synaptic plasticity, thereby encoding the animal’s position in its environment into memory. Moser noted that grid cells (and place cells) have been reported in bats, monkeys, and humans, suggesting that they originated early in mammalian evolution. Further study in this area could reveal much about how human memory works, perhaps in ways that could benefit future AI systems.

Finally, Leslie G. Valiant discussed “What are the Computational Challenges for Cortex?” Valiant said that the brain faces constraints that are not present in computing systems, such as sparsely connected neurons, limited resources, and the lack of an addressing mechanism for memory recall. Understanding these constraints is required for continuing to advance neuro-inspired computing techniques.

As examples, Valiant described two types of “Random Access Tasks” that humans perform:

- Type 1 is allocating a new concept to storage (e.g. the first time you heard of Boris Johnson). “For any stored items A (e.g. Boris), B (e.g. Johnson), allocate neurons to new item C (Boris Johnson) and change synaptic weights so that in future A and B active will cause C to be active also.”

- Type 2 is associating a stored concept with another previously stored concept. For example, you may want to associate “Boris Johnson” with “Prime Minister”. For any stored items A (Boris Johnson) and B (Prime Minister), change synaptic weights so that in the future, when A is active, B is also active.
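The two task types can be mimicked in a deliberately simplified Python sketch. Everything here is a hypothetical toy model assumed for illustration, not Valiant's own neural model: each item is a small set of neuron ids, weights are integers, and a neuron fires when the summed weights from firing neurons reach its threshold. It ignores the sparse connectivity and resource limits Valiant emphasizes and only shows what Type 1 (allocation) and Type 2 (association) ask the synaptic weights to achieve. The class `ToyCortex` and its methods are invented for this example.

```python
import itertools


class ToyCortex:
    """Hypothetical toy threshold network (NOT Valiant's actual model).

    Each item is a small set of neuron ids. A neuron fires when the summed
    integer weights from currently firing neurons reach its threshold.
    """

    def __init__(self, neurons_per_item=3):
        self.k = neurons_per_item
        self.weights = {}      # (pre_id, post_id) -> integer synaptic weight
        self.threshold = {}    # neuron id -> firing threshold
        self.items = {}        # item name -> frozenset of neuron ids
        self._ids = itertools.count()

    def _new_neurons(self, threshold):
        """Allocate k fresh neurons that share the given firing threshold."""
        ids = frozenset(next(self._ids) for _ in range(self.k))
        for n in ids:
            self.threshold[n] = threshold
        return ids

    def store(self, name):
        """Create a primitive stored item (e.g. a word already known)."""
        self.items[name] = self._new_neurons(threshold=1)

    def allocate(self, a, b, c):
        """Type 1: allocate neurons for new item c so that a AND b activate it."""
        pre = self.items[a] | self.items[b]
        # Threshold = all presynaptic neurons, so a alone or b alone is not enough.
        self.items[c] = self._new_neurons(threshold=len(pre))
        for p in pre:
            for q in self.items[c]:
                self.weights[(p, q)] = 1

    def associate(self, a, b):
        """Type 2: change weights so that stored item a activates stored item b."""
        pre = self.items[a]
        for q in self.items[b]:
            # Ceiling division, so a's neurons together reach q's threshold.
            w = -(-self.threshold[q] // len(pre))
            for p in pre:
                self.weights[(p, q)] = max(self.weights.get((p, q), 0), w)

    def recall(self, *names):
        """Activate the named items, propagate until stable, return active items."""
        active = set().union(*(self.items[n] for n in names))
        changed = True
        while changed:
            changed = False
            for q, t in self.threshold.items():
                if q in active:
                    continue
                if sum(self.weights.get((p, q), 0) for p in active) >= t:
                    active.add(q)
                    changed = True
        return sorted(name for name, ids in self.items.items() if ids <= active)


if __name__ == "__main__":
    cortex = ToyCortex()
    for word in ("Boris", "Johnson", "Prime Minister"):
        cortex.store(word)
    cortex.allocate("Boris", "Johnson", "Boris Johnson")   # Type 1 task
    cortex.associate("Boris Johnson", "Prime Minister")    # Type 2 task
    print(cortex.recall("Boris"))
    print(cortex.recall("Boris", "Johnson"))
```

In this sketch, recalling "Boris" alone activates only Boris, while recalling "Boris" and "Johnson" together also activates "Boris Johnson" and, through the association, "Prime Minister".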

Some aspects of the mechanisms of knowledge storage are now experimentally testable, and Valiant expects more algorithmic theories will become testable going forward.

Dear Mr. Douglas

Many of the topics discussed in the HLF lectures have already been solved!

I have developed a complete explanatory model of the phenomenon of the “Near-Death Experience” (NDE): in an NDE, we can consciously perceive how a single stimulus (a thought) is processed by the brain (its order and contents). You can find a PDF text in German by searching Google for [Kinseher NDERF denken_nte]. In NDEs we perceive a DIRECT ACCESS to the working brain, but so far this has been ignored by brain and AI scientists.

With NDEs it is possible to describe the brain’s processing method as a simple pattern-matching activity governed by three simple rules. In addition, we can recognize how and why our brain is so effective, as well as several attention mechanisms.

Here are some examples:

> Our brain works with an EXTERNAL body schema which is NOT part of our memories; thus the volume of stored memories can be very small.

> Our brain uses PRIMING: the state of the brain prior to a new stimulus influences how that stimulus is processed. The computation is based on the previous context, which is usually the current situation.

> Depending on the situation/priming, our brain uses two different strategies to recall memories: A) ASCENDING hierarchical order: from the 5th month of fetal life up to our current age (so far, brain research has ignored this access to our brain); B) DESCENDING hierarchical order: from our current age back to the 4th to 2nd year of childhood (this limited access to our brain is already known as “infantile amnesia”).

> Our brain uses NO clock to store experiences in memory: our experiences are only STACKED UP in memory, which gives us the illusion of temporal order.

> All our experiences are perceived, stored, and reactivated in the PRESENT TENSE; thus we can use our recalled memories immediately, on demand.

> An EXPERIENCE is made up of a) knowledge, b) body activity, c) sensory activity, d) immune-system activity, and e) emotions. Thus it is not possible to create an artificial brain with AI, because in AI we use only digits/numbers.

> Usually, when we perceive a new stimulus, our brain RE-ACTIVATES a comparable or identical experience from memory. By RE-ACTIVATING experiences (which is like activating a LINK on the internet), we can react immediately. This is a survival procedure: speed is more important than accuracy.

> Here is an example of CREATIVITY: when we perceive the stimulus ABCD, the brain may, for example, RE-ACTIVATE the experiences ABef + CDfg (in sum: ABCDeffg). If we give every component the value 1, then the doubled f gets 2 × 1 = 2!!! This gives it emphasis compared to the other components.

> Another reason for creativity and daydreaming might lie in neuronal waves that move across the cortex. These waves might activate neurons and thereby produce thoughts.

In short, it would be a good idea to study the rules, structures, and contents that we can perceive as a CONSCIOUS experience in NDEs. This direct access to the working brain is unique, and so far it has been completely ignored by brain researchers!

Additional information

A big problem for AI/robotics is how to transform OLD knowledge into NEW information when the situation has changed.

The brain has solved this problem very simply, by STATE-DEPENDENT RETRIEVAL (German: zustandsabhängiges Erinnern). Thus we can use OLD knowledge across our entire life span and transfer it into NEW experience on demand.

Yoshua Bengio is a key figure in deep learning. Deep learning finds correlations between large collections of input data frames and object categories. This is in fact one of the brain’s many functions, namely identifying an object from noisy visual and auditory information acquired under very different conditions.

However, this is a long way from modelling brain functions, because everything that makes up higher brain functions, such as reasoning or the holistic orientation that puts the recognized object into a larger context, is missing.

If one were to name a researcher whose real goal is modelling human thought and behaviour, it would not be Yoshua Bengio but Joshua Tenenbaum, Professor of Cognitive Science and Computation at MIT, where he heads the Computational Cognitive Science lab. Tenenbaum says of deep learning: “intelligence is not just about pattern recognition”. Tenenbaum’s conviction has been summarized as follows: “AI Needs a Common Sense Core Composed of Intuition. … Human common sense involves the understanding of physical objects, intentional agents and their interactions, which Tenenbaum believes can be explained through intuitive theories. This ‘abstract system of knowledge’ is based on physics (e.g. forces, masses) and psychology (e.g. desires, beliefs, plans).”

Deep learning, as developed by Yoshua Bengio, is only a small step towards common sense: a real understanding of the world in which the subject moves, and of the goals, plans, and desires that the subject cherishes and pursues. Deep learning is therefore a necessary but not sufficient step towards creating a world-competent being.