Accessibility: Transcriptions at the Heidelberg Laureate Forum

Thursday morning, at the Heidelberg Laureate Forum, text from a live transcription began to appear at the bottom of one of the main screens. Given the complexity of the topics under discussion, the transcription was remarkably faithful, almost all of the technical terms came out correctly, although “Gödel’s theorem” came out as “goods theorem”, and it was very, very fast.

We’ve heard a lot about various areas of artificial intelligence and Deep Learning at this year’s and also at previous HLFs. Was this an application? My curiosity piqued, I went to the organizers to find out. I learned that today’s transcription was a test, and it did make use of at least some network infrastructure. But the processing power behind it all came from two human brains: those of Jennifer Schuck and Teri Darrenougue, respectively. Jen is sitting in Arizona, Teri in California, and they transcribe the audio from the HLF lectures in alternating 15-minute shifts, listening in from the other side of the Atlantic via Skype.

How the transcripts are produced

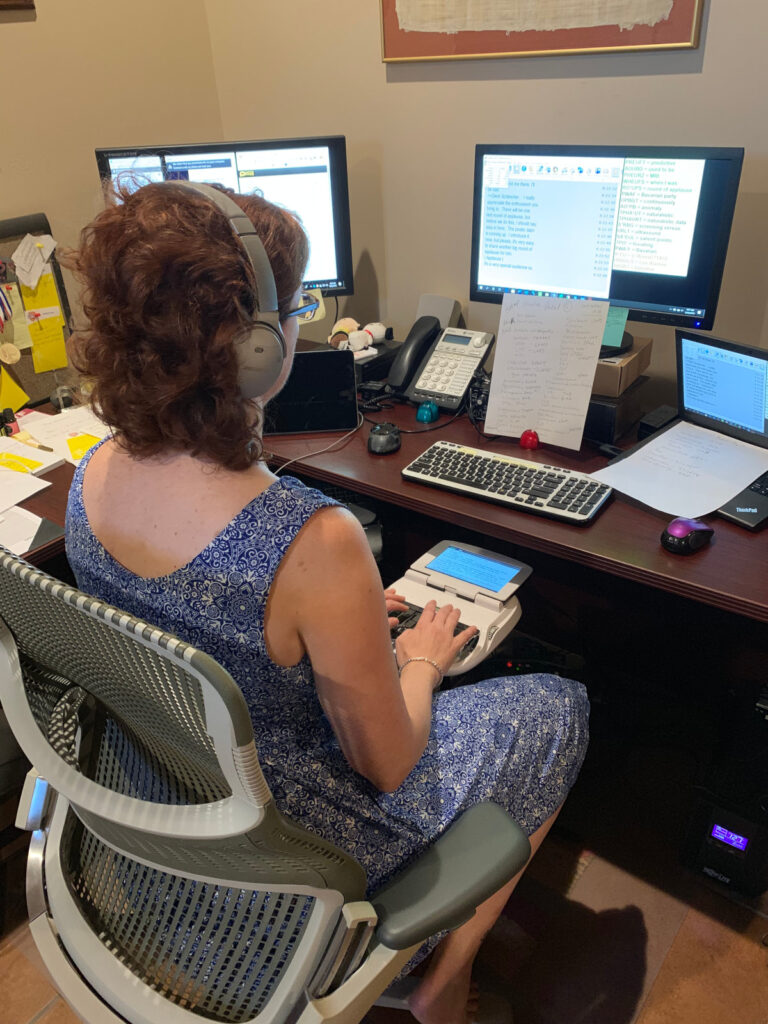

I was eager to find out how this worked and had the opportunity to interview Jen via, quite appropriately, text-based chat. The short answer is that Jen and Teri are international-level mental athletes. For the long answer, here is a view of Jen near the end of Thursday afternoon’s lecture by Shwetak Patel.

If you look closely at the screen, Dierk Schleicher has just finished the session and is introducing the flash presentations for the posters. Directly in front of Jen is the key piece of hardware: a stenography machine. Mechanical versions of such machines have been in use since the mid-19th century. Stenography machines are still in use to document court proceedings, or sessions of various parliaments – or, as in this case, produce live transcripts. (In fact, both Jen and Teri started out originally in the judicial system). The machine in front of Jen is an electronic version, which transfers its results directly to the computer in front of Jen and thence to the projection at the HLF.

Want to go faster? Just learn 80,000 abbreviations

Stenographic machines have fewer keys than regular keyboards. Jen’s model has 26 different keys. To compensate, the machine accepts combinations of multiple keys, pressed at the same time. Some combinations represent syllables, so the speech-to-text-reporter can type in directly what they hear. But that alone wouldn’t be fast enough. In order to reach up to 240 words per minute, as Jen does, one needs to use abbreviations – combinations of signs, which the software resolves into words or even phrases.

This is where it gets really impressive: Jen knows roughly 80,000 such abbreviations by heart, enabling her to write longer words or phrases with a single combination of keys. Teri and Jen have prepared an additional 300 such combinations directly for the HLF. “ST*GD“, for instance, stands for “stochastic gradient descent,” a rather uncommon word combination heard quite frequently in today’s morning lecture by John Hopcroft. For the more unusual words, she has an event-specific list in front of her as a memory aid. Where slides were available, the two prepared their transliteration based on the slides, and where they had no additional material, they did their own research on the topic of the talk. The more information they have, the better the captioning will be.

Medals, artificial intelligence, and numbers

When I suggest that such mental acrobatics is equivalent to high-performance athletics, Jen points me to Intersteno, the international meetings of stenographers, which includes competition. In fact, Jen won a bronze medal for short-hand speech capturing (stenotype machine category) at this year’s Intersteno in Cagliari, Sardinia, and a gold medal in the same discipline at the Intersteno in Berlin two years earlier.

In particular, given the progress in artificial intelligence and deep learning that is the subject of a number of this year’s HLF lectures, is Jen afraid she is about to be replaced by software? That, Jen says, has been hotly debated in her profession for the past 20+ years. At least for the foreseeable future, she believes that quality captioning will need to rely on humans – although maybe not in the same exact role as today. She hopes she will still provide quality captioning to large events well into the future, in some form or fashion.

Back to the present, though: For those HLF participants who are not native speakers of English, this Thursday’s transcripts certainly provide additional help. They would also help participants who are deaf follow the lecture (two years ago, we had specialist sign-language interpreters for that). In total, Jen and Teri transcribed a total of 225 minutes of lectures and talks this Thursday, amounting to 34,000 words. At least from what I have seen, this year’s test was a success – for better accessibility, I hope that means the organizers will introduce captioning for the whole of the 8th HLF in 2020!

Markus Pössel wrote (27. September 2019):

> Accessibility: Transcriptions at the Heidelberg Laureate Forum

> […] transcribe[d …] audio from the HLF lectures

Are transcription of the HLF lectures available to the public?

Are presentation slides of the HLF lectures available to the public?

Fantastischer Blogpost, Markus.

War wie immer schön, dich als Twitterstimme dabei zu haben.